MyBatis is a first class persistence framework with support for custom SQL, stored procedures and advanced mappings. MyBatis eliminates almost all of the JDBC code and manual setting of parameters and retrieval of results.

MyBatis can use simple XML or Annotations for configuration and map primitives, Map interfaces and Java POJOs (Plain Old Java Objects) to database records.

MyBatis makes it easier to build better database-oriented applications more quickly and with less code.

SQL server

I am using Microsoft SQL Server 2017 in my example, but you are free to use any other SQL server.

I have Microsoft SQL Server 2017 installed on RHEL 7. The installation and configuration was a matter of just a couple of minutes. Here is what I did.

Configure the repository

sudo curl -o /etc/yum.repos.d/mssql-server.repo https://packages.microsoft.com/config/rhel/7/mssql-server-2017.repo

Install the server

sudo yum install -y mssql-server

Configure the server

sudo /opt/mssql/bin/mssql-conf setup

If you want to use a different port

sudo /opt/mssql/bin/mssql-conf set network.tcpport 1433

Enable and start the service

systemctl enable mssql-server

systemctl status mssql-server

Configure your firewall and reload the settings

sudo firewall-cmd --zone=public --add-port=1433/tcp --permanent

sudo firewall-cmd --reload

To access the server, you can use Microsoft SQl Management Studio, which has about 900 MB, or a more lightweight solution like SQuirrel

Database and Table

For my example, I created a database eknori and a table users. The table is dead simple with only an id, firstname and lastname for a user.

CREATE TABLE [dbo].[users](

[id] [varchar](50) NOT NULL,

[firstname] [varchar](max) NULL,

[lastname] [varchar](max) NOT NULL,

) ON [PRIMARY] TEXTIMAGE_ON [PRIMARY]

myBatis

With SQl server running, we can now start to create our project to work with the database.

You can download the source code here.

Since we are communicating with the database, we have to configure the details of the database. config.xml is the file used for the XML-based configuration. By using this file, you can configure various elements.

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE configuration PUBLIC "-//mybatis.org//DTD Config 3.0//EN" "http://mybatis.org/dtd/mybatis-3-config.dtd">

<configuration>

<typeAliases>

<typeAlias alias="User" type="de.eknori.sql.model.User"/>

</typeAliases>

<environments default="development">

<environment id="development">

<transactionManager type="JDBC"/>

<dataSource type="POOLED">

<property name="driver" value="com.microsoft.sqlserver.jdbc.SQLServerDriver"/>

<property name="url" value="jdbc:sqlserver://YourServer:1433;databaseName=eknori"/>

<property name="username" value="sql-user"/>

<property name="password" value="sql-pwd"/>

</dataSource>

</environment>

</environments>

<mappers>

<mapper resource="de/eknori/sql/mybatis/mapper/User.xml"/>

</mappers>

</configuration>

Within the environments element, we configure the environment of the database that we use in our application.

In MyBatis, you can connect to multiple databases by configuring multiple environment elements. To configure the environment, we are provided with two sub tags namely transactionManager and dataSource.

- JDBC, the application is responsible for the transaction management operations, such as, commit, roll-back, etc…

- MANAGED, the application server is responsible to manage the connection life cycle. It is generally used with the Web Applications

The dataSource tag is used to configure the connection properties of the database, such as driver-name, url, user-name, and password of the database that we want to connect.

Instead of specifying the absolute class name everywhere, we can use typeAliases, a shorter name for a Java type.

The mapper element is used to configure the location of mapper xml files, which contain the mapped SQL statements. Our file is User.xml.

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE mapper PUBLIC "-//mybatis.org//DTD Mapper 3.0//EN" "http://mybatis.org/dtd/mybatis-3-mapper.dtd">

<mapper namespace="User">

<resultMap id="result" type="User">

<result property="id" column="id"/>

<result property="firstname" column="firstname"/>

<result property="lastname" column="lastname"/>

</resultMap>

<select id="selectAll" resultMap="result">

SELECT * FROM users;

</select>

<select id="selectById" parameterType="int" resultMap="result">

SELECT * FROM users WHERE id = #{id}

</select>

<insert id="insert" parameterType="User">

INSERT INTO users (id, firstname, lastname) VALUES (#{id},#{firstname}, #{lastname});

</insert>

<update id="update" parameterType="User">

UPDATE users

SET firstname = #{firstname}

SET lastname = #{lastname}

WHERE id = #{id}

</update>

<delete id="delete" parameterType="int">

DELETE from users WHERE id = #{id}

</delete>

</mapper>

This configuration abstracts almost all of the JDBC code, and reduces the burden of setting of parameters manually and retrieving the results.

We only need some simple methods and POJO to send and receive data to and from the database.

Here is our User object.

package de.eknori.sql.model;

public class User {

private int id;

private String firstname;

private String lastname;

public int getId() {

return id;

}

public void setId(int id) {

this.id = id;

}

public String getFirstName() {

return firstname;

}

public void setFirstName(String name) {

firstname = name;

}

public String getLastname() {

return lastname;

}

public void setLastname(String lastname) {

this.lastname = lastname;

}

@Override

public String toString() {

return "id: " + id + " FirstName: " + firstname + " LastName: " + lastname;

}

}

userDAO contains the methods that use the mapped SQL statements from User.xml

package de.eknori.sql.dao;

import java.util.List;

import org.apache.ibatis.session.SqlSession;

import org.apache.ibatis.session.SqlSessionFactory;

import de.eknori.sql.model.User;

public class UserDAO {

private SqlSessionFactory sqlSessionFactory = null;

public UserDAO(SqlSessionFactory sqlSessionFactory) {

this.sqlSessionFactory = sqlSessionFactory;

}

public List<User> selectAll() {

List<User> list = null;

SqlSession session = sqlSessionFactory.openSession();

try {

list = session.selectList("User.selectAll");

} finally {

session.close();

}

return list;

}

public User selectById(int id) {

User user = null;

SqlSession session = sqlSessionFactory.openSession();

try {

user = session.selectOne("User.selectById", id);

} finally {

session.close();

}

return user;

}

public int insert(User user) {

int id = -1;

SqlSession session = sqlSessionFactory.openSession();

try {

id = session.insert("User.insert", user);

} finally {

session.commit();

session.close();

}

return id;

}

public void update(User user) {

@SuppressWarnings("unused")

int id = -1;

SqlSession session = sqlSessionFactory.openSession();

try {

id = session.update("User.update", user);

} finally {

session.commit();

session.close();

}

}

public void delete(int id) {

SqlSession session = sqlSessionFactory.openSession();

try {

session.delete("User.delete", id);

} finally {

session.commit();

session.close();

}

}

}

Finally we have ConnectionFactory that reads config.xml and establishes a connection to the database.

package de.eknori.sql.mybatis;

import java.io.FileNotFoundException;

import java.io.IOException;

import java.io.Reader;

import org.apache.ibatis.io.Resources;

import org.apache.ibatis.session.SqlSessionFactory;

import org.apache.ibatis.session.SqlSessionFactoryBuilder;

public class ConnectionFactory {

private static SqlSessionFactory sqlSessionFactory;

static {

try {

String resource = "de/eknori/sql/mybatis/config.xml";

Reader reader = Resources.getResourceAsReader(resource);

if (sqlSessionFactory == null) {

sqlSessionFactory = new SqlSessionFactoryBuilder().build(reader);

}

} catch (FileNotFoundException fileNotFoundException) {

fileNotFoundException.printStackTrace();

} catch (IOException iOException) {

iOException.printStackTrace();

}

}

public static SqlSessionFactory getSqlSessionFactory() {

return sqlSessionFactory;

}

}

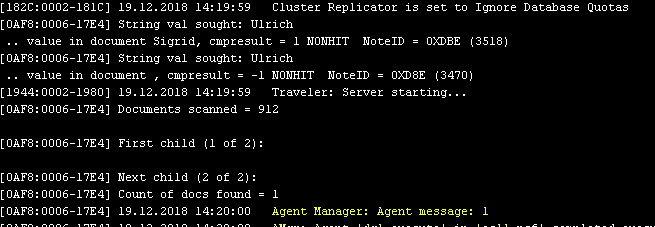

And, action!

We are now ready to read and write data from and to the database

package de.eknori.sql;

import java.util.List;

import de.eknori.sql.dao.UserDAO;

import de.eknori.sql.model.User;

import de.eknori.sql.mybatis.ConnectionFactory;

public class Main {

private static User user = null;

private static int id = 999;

public static void main(String args[]) {

UserDAO userDAO = new UserDAO(ConnectionFactory.getSqlSessionFactory());

List<User> users = userDAO.selectAll();

users.forEach(element -> System.out.println(element));

userDAO.delete(id);

user = new User();

user.setId(id);

user.setFirstName("Ulrich");

user.setLastname("Krause");

userDAO.insert(user);

user = userDAO.selectById(id);

System.out.println(user);

}

}

That is easy, isn’t it?

myBatis has more options to configure your project like Annotations and Dynamic SQL, but I found the configuration via .xml mapper files the easiest one to start with.

If you want to use other SQL servers instead, you only have to configure config.xml accordingly and download and import the correct driver.

Following part 1 – 5 of the Electron tutorial you’re probably ready with the design, preparation and debugging of your app.

Following part 1 – 5 of the Electron tutorial you’re probably ready with the design, preparation and debugging of your app.